Reflection as a Mechanism of Unintentional Intelligence

Can a system without intention solve problems as effectively as one with it? A survey of reflection mechanisms and the challenges of implementing them in modern LLMs.

In discussions about AGI, no word comes up more often than "consciousness." Some argue that a machine must "achieve consciousness" to truly think: without subjective experience, without qualia, the sense of "what it is like" to be something, intelligence remains mere imitation. This position draws on a half-century tradition of arguments for the necessity of subjective experience (John Searle, 1980; David Chalmers, 1995), and it is intuitively compelling.

There is another word — less dramatic but more important for our purposes: "reflection." The simple ability of a system to evaluate its own output, compare it against a criterion, and correct itself. A functional loop possessed by any thermostat, by a DNA polymerase molecule, and — in distributed form — by an ant colony. None of these systems is conscious, yet all of them are reflective. Besides reflection, consciousness includes qualities such as:

- intentionality — the directedness of attention toward a specific object

- integrativity — the capacity to unify disparate signals into a coherent whole

- temporality — the ability to retain the past and anticipate the future over extended durations

- sense of agency — the feeling of authoring one's own actions, the mechanism that distinguishes "I do" from "it happens to me"

Consciousness typically includes reflection as just one of its mechanisms. But reflection is possible outside consciousness, as a pure mechanism of interaction between an object and its environment. Moreover, unlike consciousness, reflection appears at virtually every level of material organization where the system is sufficiently complex. And most of the practical properties we attribute to a "conscious" AGI — planning, self-correction, awareness of one's own limitations — are in fact properties of reflection.

Reflection — a system's ability to evaluate its own output and correct it — requires neither consciousness, nor intention, nor subjective experience. It arises at every level of material organization, from quantum particles to scientific communities. And it is precisely this component of consciousness that is being leveraged today in attempts to build AGI.

To see why, we will trace reflection from the bottom up: from abstract information through inanimate and living matter to collective intelligence.

Cybernetics: The Universal Schema of Reflection

In 1948, Norbert Wiener proposed (Norbert Wiener, 1948) that any self-correcting system consists of at least three elements linked by a feedback loop:

- Receptor / Sensor — a sense organ or detector that captures information about the state of the external environment.

- Comparator — an element that compares the sensor's reading with a target value and computes the difference.

- Effector — an actuator or part of the system that performs an action (e.g., a motor, a muscle, or any mechanism that does work).

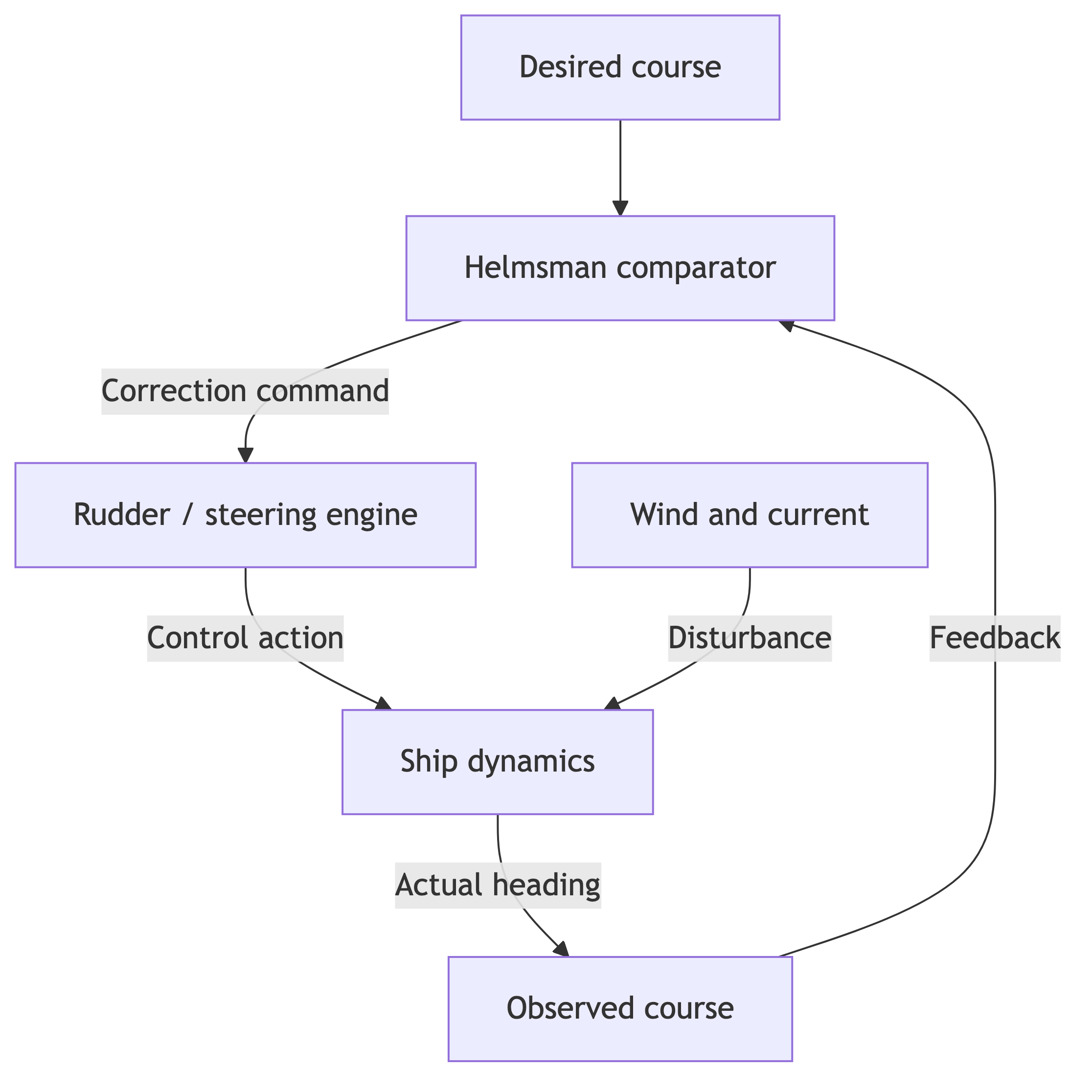

Wiener called the discipline that studies such systems cybernetics — the science of control and communication in living organisms and machines. The word comes from the Greek κυβερνήτης ("helmsman"): like a ship's helmsman, the system continuously compares the current course with the goal and corrects its trajectory (Fig. 1).

For Wiener, control alone was not enough — communication mattered just as much: the system must not merely act but transmit the error signal between its components. At the same time, feedback is useful only as long as it does not drive the system into oscillations and instability. In other words, reflection emerges as the result of a loop that keeps the system in a stable operating regime. This is what creates the impression of a "smart system" — one that adapts to external conditions.
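The sensor, comparator, effector loop and the stability caveat can be sketched in a few lines of Python. This is an illustrative toy rather than Wiener's own formulation: the function name `run_loop`, the `gain` parameter, and the thermostat-like numbers are all assumptions made for the example.

```python
def run_loop(target: float, state: float, gain: float, steps: int) -> list[float]:
    """Simulate a discrete proportional feedback loop and return the state history."""
    history = [state]
    for _ in range(steps):
        reading = state                # sensor: observe the current state
        error = target - reading       # comparator: distance from the target value
        state = state + gain * error   # effector: act in proportion to the error
        history.append(state)
    return history


# Assumed numbers: a room at 10 degrees, a target of 20 degrees.
stable = run_loop(target=20.0, state=10.0, gain=0.5, steps=20)
unstable = run_loop(target=20.0, state=10.0, gain=2.5, steps=20)

print(abs(stable[-1] - 20.0) < 1e-3)    # moderate gain: the loop settles on the target
print(abs(unstable[-1] - 20.0) > 1000)  # excessive gain: each correction overshoots further
```

In this toy model the error is multiplied by `1 - gain` at every step, so the same loop that stabilizes the system at a moderate gain drives it into growing oscillations once the gain exceeds 2.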

Wiener's schema — sensor, comparator, effector — is a universal language for describing any form of self-correction. Now let us trace how this pattern manifests at each level of material organization, starting with the most abstract.

Level 1. The Abstract: Self-Correction of Pure Information

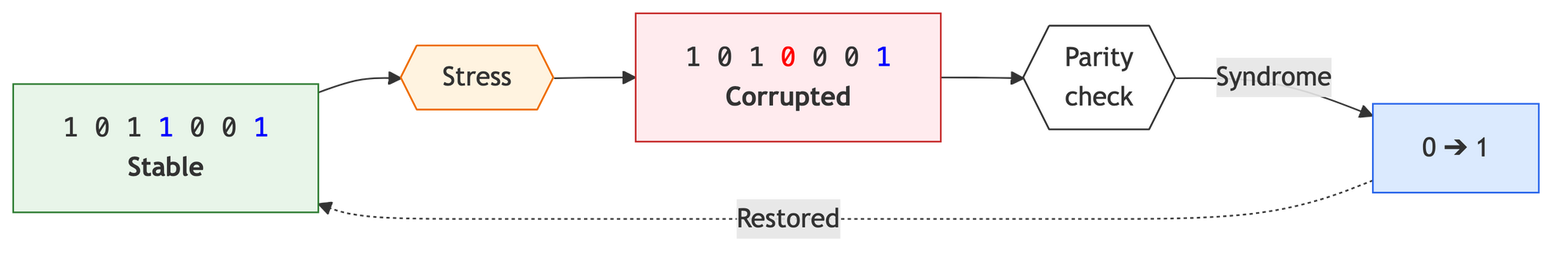

The purest example of reflection involves neither matter nor life, just information defending itself against chaos. Hamming codes (Fig. 2) are a practical example of "digital homeostasis." Special parity bits are added to the useful data, each monitoring a specific group of bits. If an external disturbance, say interference in a cable, flips a single bit (1 → 0), the system instantly "senses" the problem:

- Stress (Disturbance): External noise inverts one bit in the sequence. In the diagram, this is the transformation of a "one" into a red zero at position 4. The system transitions from a stable state to one containing an "information defect."

- Response (Comparator): The parity bits, embedded in the data structure, "detect" the anomaly. A mathematical algorithm performs a parity check: the checksums no longer match, producing a nonzero error "syndrome."

- Correction (Effector): Based on the syndrome, the system computes the exact coordinate of the failure. At the intersection of the parity bits' responsibility zones, an inverse operation is triggered: inversion 0 → 1. The loop closes, restoring the data block to its original stable state.
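The three steps above can be sketched with a Hamming(7,4) code. This is a minimal sketch assuming the textbook layout (parity bits at positions 1, 2, and 4; data bits at 3, 5, 6, and 7), which may not match the numbering used in the figure; the function names are illustrative.

```python
def encode(data: list[int]) -> list[int]:
    """Encode 4 data bits into a 7-bit Hamming codeword (positions 1..7)."""
    d1, d2, d3, d4 = data
    p1 = d1 ^ d2 ^ d4   # checks positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4   # checks positions 2, 3, 6, 7
    p4 = d2 ^ d3 ^ d4   # checks positions 4, 5, 6, 7
    return [p1, p2, d1, p4, d2, d3, d4]


def syndrome(word: list[int]) -> int:
    """Return 0 if every parity check passes, else the 1-based position of the flipped bit."""
    result = 0
    for position, bit in enumerate(word, start=1):
        if bit:
            result ^= position   # XOR of the positions of all set bits
    return result


def correct(word: list[int]) -> list[int]:
    """Flip the bit the syndrome points at (assumes at most one bit is wrong)."""
    fixed = word.copy()
    position = syndrome(word)
    if position:
        fixed[position - 1] ^= 1
    return fixed


codeword = encode([1, 0, 1, 1])
received = codeword.copy()
received[4] ^= 1                        # disturbance: noise flips position 5

print(syndrome(codeword))               # clean block: all checks pass
print(syndrome(received))               # damaged block: nonzero syndrome
print(correct(received) == codeword)    # correction restores the original
```

The syndrome doubles as comparator output and as address: it is zero for an intact block and otherwise equals the position of the damaged bit, so no separate search step is needed.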

Wiener's pattern works in the realm of abstractions: state → disturbance → correction. Hamming codes are the simplest case, correcting a single bit. In real information systems, the same principle scales into multi-layered protection: Reed–Solomon codes restore entire data blocks on damaged CDs and DVDs; the TCP protocol retransmits lost packets using checksums and timeouts; RAID-6 allows a disk array to survive the simultaneous failure of two drives. In every case, information protects itself through redundancy — a reflective loop embedded in the structure of the data.

Level 2. Inanimate Matter: Reflection Without a Designer

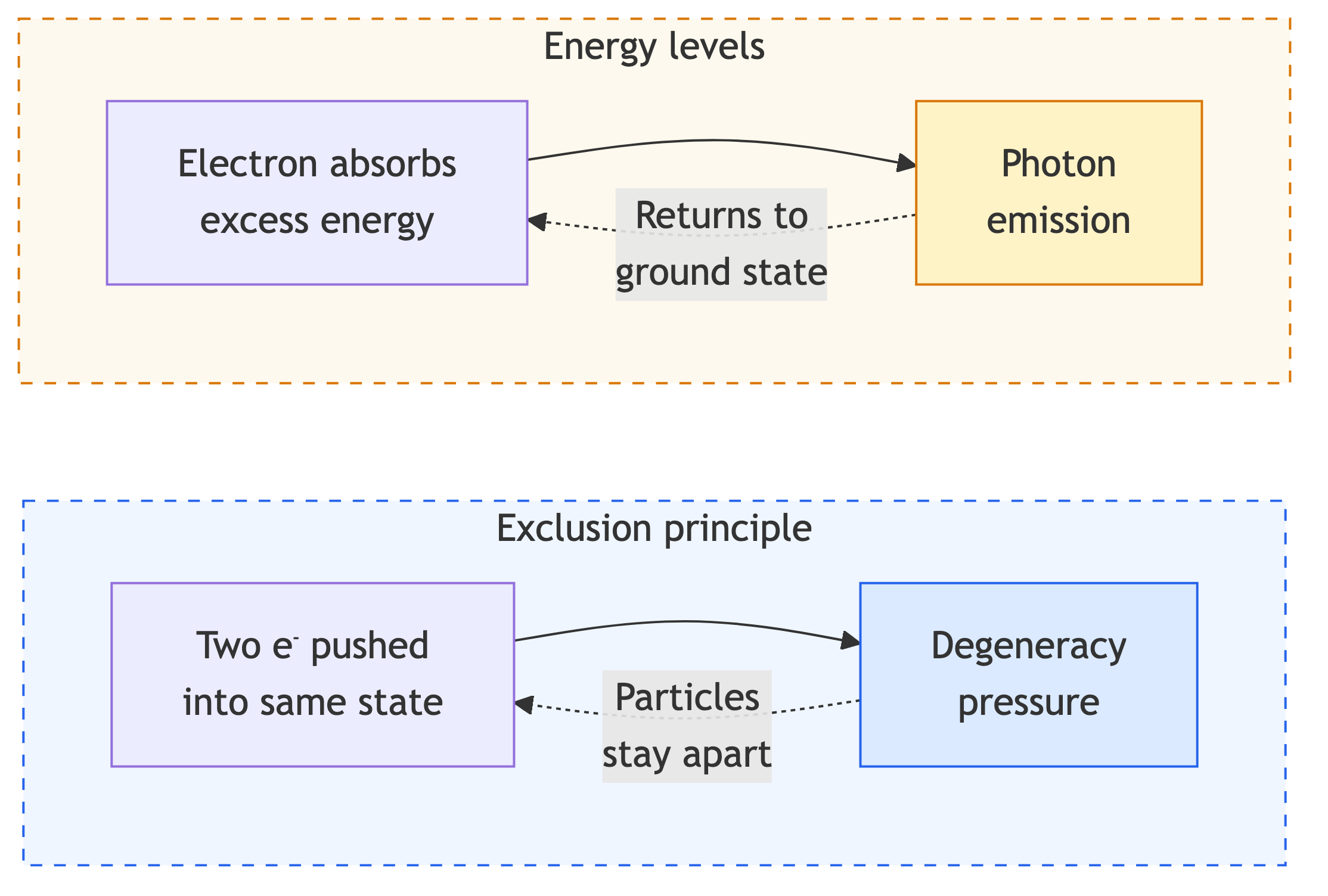

Quantum reflection: a single particle. At the quantum level, feedback-like mechanisms appear even in the behavior of individual particles. Here, Wiener's "receptor," "comparator," and "effector" are fused with the very fabric of reality. The Pauli exclusion principle, for instance, determines the particular structure of matter. Every electron in an atom is described by a set of quantum numbers — a kind of "address" specifying its orbit, energy, and spin direction. The Pauli principle forbids two electrons from sharing the same address.

Suppose two electrons are pushed toward the same state with identical quantum numbers (Fig. 3). The moment an external force, say the colossal pressure inside a dying star, tries to "squeeze" a particle into an already occupied state, the system responds with a counterforce. The electrons' shared wave function must remain antisymmetric under particle exchange, so the doubly occupied state is simply unavailable, and the squeezed electrons are forced into higher-momentum states. The result is what is known as degeneracy pressure. The outcome of the process is directed at compensating the disturbance (the attempt at compression); the same exclusion keeps atoms from collapsing and allows solid matter to exist. The system corrects its state because any other behavior is forbidden by the exchange symmetry of the wave function itself, and every electron obeys this principle.

Strictly speaking, there is no separate "sensor" or "comparator" from Wiener's schema here — there is a fundamental symmetry prohibition that makes certain states impossible. This is more of a metaphorical extension of the concept of reflection: correction is built into the physics itself rather than arising as a superstructure. Nevertheless, the result is the same — the system returns to an allowed state after a disturbance, and it is precisely this functional pattern that links the quantum level to the other rungs of the ladder.

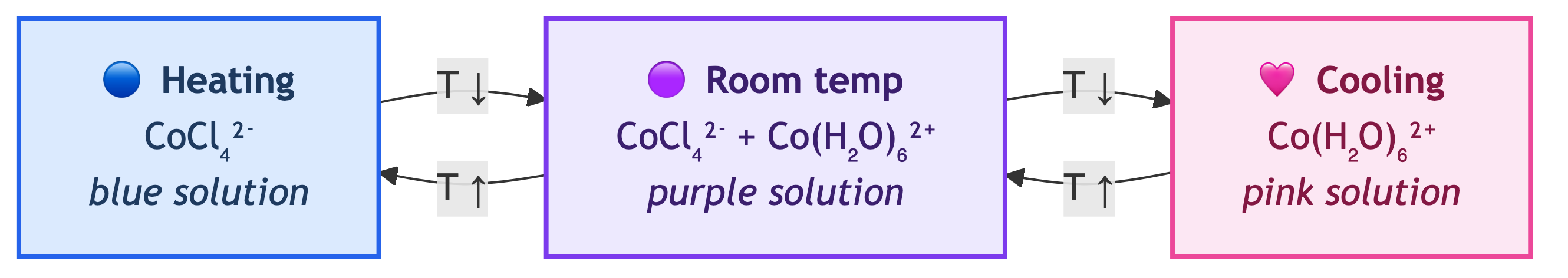

Statistical reflection: an ensemble of particles. When the number of particles grows large, a new level of reflection emerges: a statistical one. A striking manifestation at the inorganic level is Le Chatelier's principle: a chemical system at equilibrium, when subjected to a disturbance, shifts so as to partially compensate for it. A vivid example of such "natural reflection" is the classic cobalt chloride experiment. Suppose a solution contains two cobalt complex ions in equilibrium (Fig. 4), the pink $[\mathrm{Co(H_2O)_6}]^{2+}$ and the blue $[\mathrm{CoCl_4}]^{2-}$. Converting pink to blue absorbs heat, so heating the solution shifts the equilibrium toward blue, and cooling shifts it back toward pink.

The outcome of the process (release or absorption of heat) is directed at compensating the cause (the external temperature change), and the system does this on its own, without sensors or wires. In essence, this is a property of thermodynamics — a consequence of Gibbs free energy minimization. Note that for a single molecule, the concepts of "equilibrium" or "pressure" do not exist. Reflection emerges only when the number of particles is sufficiently large.
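The direction of this compensation can be made quantitative with the van 't Hoff relation, a standard thermodynamic result that is added here for illustration and is not part of the original argument. Here $K$ is the equilibrium constant, $\Delta H^\circ$ the standard reaction enthalpy, $R$ the gas constant, and $T$ the absolute temperature:

$$\frac{d \ln K}{dT} = \frac{\Delta H^\circ}{R T^2}$$

For a heat-absorbing reaction ($\Delta H^\circ > 0$) the right-hand side is positive, so $K$ grows with temperature and heating pushes the equilibrium toward the side that absorbs the added heat. For a heat-releasing reaction the sign reverses.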

Thus, even at the level of inanimate nature, self-correction mechanisms exist — without cells, without neurons, without intention. But such systems have only a single loop: they respond to a disturbance without "remembering" previous ones.

Level 3. The Cell: A Hierarchy of Loops

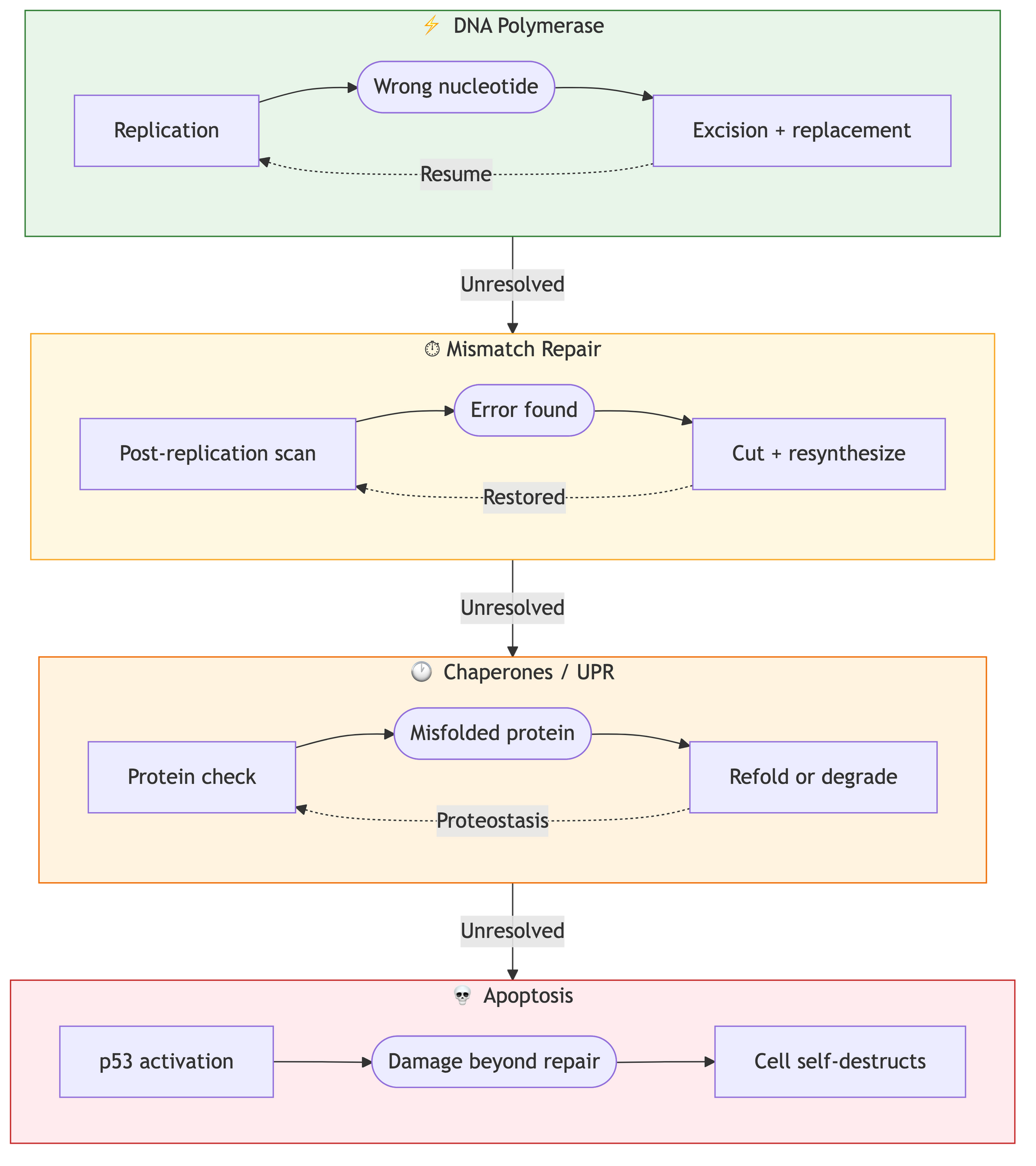

The cardinal distinction of biological systems is the multi-level nature of their reflection. Even a single cell is not one feedback loop but an entire hierarchy of nested loops, where each successive one activates only when the previous one has failed.

DNA polymerase, for example, works like a typewriter with a built-in eraser: if it places the wrong "letter," it physically cannot proceed until it "erases" the error and replaces the nucleotide with the correct one (Fig. 5). If this instant self-check fails, the next tier of quality control kicks in: specialized editor proteins re-examine the finished text. At the whole-cell level, chaperones attempt to refold misshapen proteins. Finally, in a critical situation where accumulated errors threaten the organism, the system makes a final reflective decision — triggering apoptosis (self-destruction) to prevent the buildup of chaos.

The fundamental difference between living and inanimate matter is the emergence of memory and of a composite hierarchy. Chemical equilibrium does not remember past disturbances; a cell does, and builds defenses accordingly. Reflection ceases to be a one-off reaction and becomes a multi-layered process. Yet the cell is still unable to evaluate the quality of its own evaluation. That leap occurs at the level of the organism.

Level 4. The Organism: From Body to "Knowing What You Don't Know"

Proprioception is a basic example of reflection at the organism level. The brain predicts the sensory outcome of a movement, compares the prediction with the actual result, and immediately makes corrections. Imagine you are about to lift a large box that you believe is packed full of books.

- Prediction: The brain calculates the required effort in advance and commands the muscles to brace for a 15 kg pull.

- Reality: The box turns out to be empty. The moment you begin the motion, your arm shoots upward far faster than expected.

- Comparison (prediction error): At this point, the "proprioceptive sensor" (receptors in muscles and tendons) registers a massive mismatch between plan and reality.

- Correction: The brain instantly, within fractions of a second, "reflects" on this error and sends a braking signal so you don't smack yourself in the face with the box.

This cycle of "Prediction, Comparison, Correction" runs continuously, many times per second: when you walk on uneven ground, type without looking at the keyboard, or try to touch the tip of your nose with your eyes closed. There is no conscious deliberation here; it is a pure cybernetic loop in which movement self-corrects during execution.

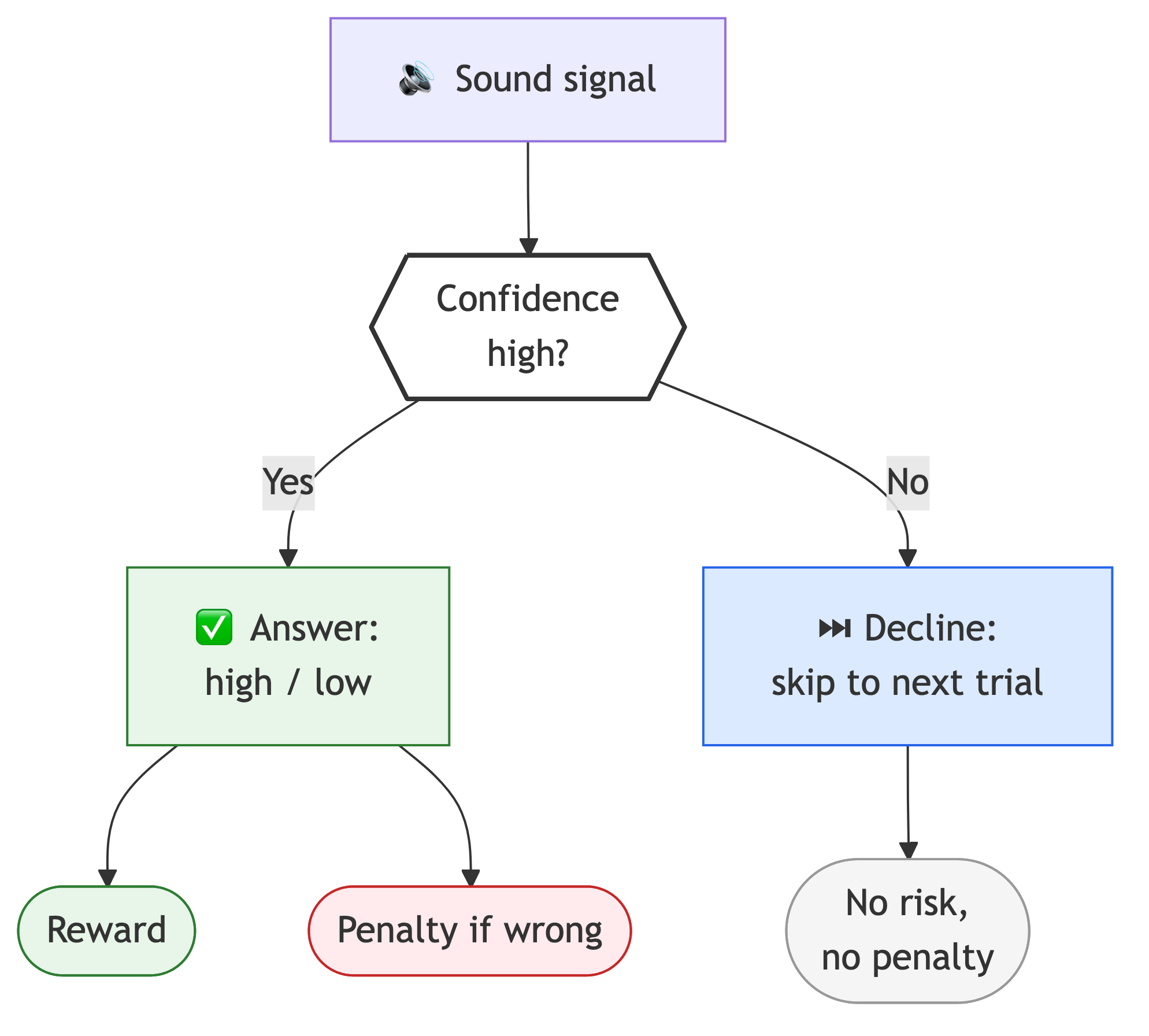

Metacognitive reflection: "Do I know what I know?" Proprioception is reflection of the body. But organisms are capable of something more: evaluating not just the external signal, but the reliability of their own processing of that signal. This is a qualitative leap — reflection upon reflection.

Experiments by J. David Smith with bottlenose dolphins (Smith et al., 1995) demonstrated that mammals can monitor their own uncertainty. A dolphin was asked to distinguish between sound tones of different frequencies (Fig. 6). For a correct identification of the higher-frequency tone, the dolphin received a reward; for an error, a temporary penalty in the form of a delay before the next trial. The researchers then introduced a third option: the ability to "decline" the trial. When the frequency difference became critically small and the task was objectively difficult, the dolphin preferred to press the decline button, avoiding the penalty and moving on to the next round. This is how the internal reflective loop works: the animal evaluates not just the external signal but the reliability of its own brain's processing of that signal, and chooses a strategy that minimizes risk.
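The logic of the decline option can be sketched as an expected-value comparison. This is a toy decision model, not the analysis used in the study: the Gaussian perceptual noise, the payoff values, and the names `p_correct` and `choose` are assumptions made for illustration.

```python
import math


def p_correct(freq_gap_hz: float, noise_sd_hz: float) -> float:
    """Probability of judging the tone correctly when the percept carries Gaussian noise."""
    z = freq_gap_hz / noise_sd_hz
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))   # standard normal CDF


def choose(freq_gap_hz: float, noise_sd_hz: float = 50.0,
           reward: float = 1.0, penalty: float = -2.0,
           decline_value: float = 0.0) -> str:
    """Answer only when the expected payoff of answering beats the value of declining."""
    p = p_correct(freq_gap_hz, noise_sd_hz)
    expected_if_answering = p * reward + (1.0 - p) * penalty
    return "answer" if expected_if_answering > decline_value else "decline"


print(choose(200.0))   # large gap: confidence is high, so answering pays off
print(choose(5.0))     # tiny gap: confidence is near chance, so declining is safer
```

The second-order step is the input to `choose`: the decision depends on an estimate of the system's own reliability (`p_correct`), not on the tone itself.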

Analogous results have been obtained in studies of rhesus macaques (Middlebrooks & Sommer, 2012, Neuron; Hampton, 2001, PNAS). Monkeys were given a visual memory task and then had to choose the size of their reward: a guaranteed small prize or a large prize with the risk of total loss if they were wrong. Macaques chose the high-risk option only when their memory gave a clear answer, and switched to the conservative strategy — a bird in the hand — when their internal model flagged gaps in the data. Studies of neural activity showed that at this moment, a specific "confidence prediction error" signal forms in the prefrontal cortex and anterior cingulate cortex of the macaque brain — effectively a biological analogue of a metacognitive comparator.

Deferred reflection. Biological reflection does not cease when consciousness switches off; during sleep, it shifts into a mode of deep systemic optimization. During slow-wave sleep, memory consolidation takes place: the hippocampus begins replaying the day's accumulated experience to the neocortex at an accelerated pace. In essence, this is a reflective "replay" of events to separate the important from the incidental. The brain analyzes the day's prediction errors and updates its internal proprioceptive and cognitive models, freed from the distraction of incoming sensory input. This "audit" allows the system to resolve information conflicts and restructure long-term memory, transforming chaotic experience into ordered knowledge.

In summary, the complex hierarchy of cells within an organism adds new reflective loops on top of the reflection of individual cells: prediction (proprioception), evaluation of one's own confidence (metacognition), and optimization of reflection itself (sleep). None of these properties requires consciousness — the dolphin does not reflect on "what it is like to be a dolphin"; it simply presses the decline button when it is not sure.

Level 5. The Swarm: Distributed Reflection Without a Reflector

In distributed systems, reflection is implemented through external interaction markers of three types: material traces in the environment, coordination signals, and persistent records of collective experience.

Stigmergic reflection. In this framework, coordination arises through the environment — a mechanism known as stigmergy: the trace of one agent's action becomes a guide for others. In ants, these external markers are pheromone trails; the colony's memory is, in a sense, externalized into the environment in active form. An individual ant acts chaotically and possesses no global plan, but its interaction with pheromones creates a feedback loop: the shorter the path to food, the more frequently ants traverse it per unit of time, and the stronger the chemical signal becomes for the rest of the colony.

Pheromone evaporation serves as an "error filter" (discarding suboptimal routes), while accumulation serves as "reinforcement of successful solutions." If an obstacle appears on a path, the system responds to the "stress" with an instant redistribution of traffic: the old trail fades and the detour strengthens automatically, with no centralized command. The colony reflects changes in the landscape, using itself as a computational network.
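The interplay of accumulation and evaporation can be sketched with a deterministic, averaged model of two competing trails. This is a simplification made for illustration (real colonies are stochastic, and ants choose trails nonlinearly); the function name and every parameter value are assumptions.

```python
def simulate_trails(lengths: list[float], steps: int = 200,
                    evaporation: float = 0.1,
                    ants_per_step: float = 100.0) -> list[float]:
    """Return the final share of pheromone on each trail."""
    pheromone = [1.0 for _ in lengths]           # start with no preference
    for _ in range(steps):
        total = sum(pheromone)
        shares = [p / total for p in pheromone]  # traffic splits in proportion to trail strength
        for i, length in enumerate(lengths):
            # A shorter trail is completed more often per unit time,
            # so it receives more pheromone per ant choosing it.
            deposit = ants_per_step * shares[i] / length
            # Evaporation removes a fixed fraction of the old signal.
            pheromone[i] = (1.0 - evaporation) * pheromone[i] + deposit
    total = sum(pheromone)
    return [p / total for p in pheromone]


short_share, long_share = simulate_trails([1.0, 2.0])
print(short_share > 0.9)   # the colony converges on the shorter route
```

No ant in this model compares the two routes. The comparison is carried out by the environment: the trail that is reinforced faster than it evaporates wins.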

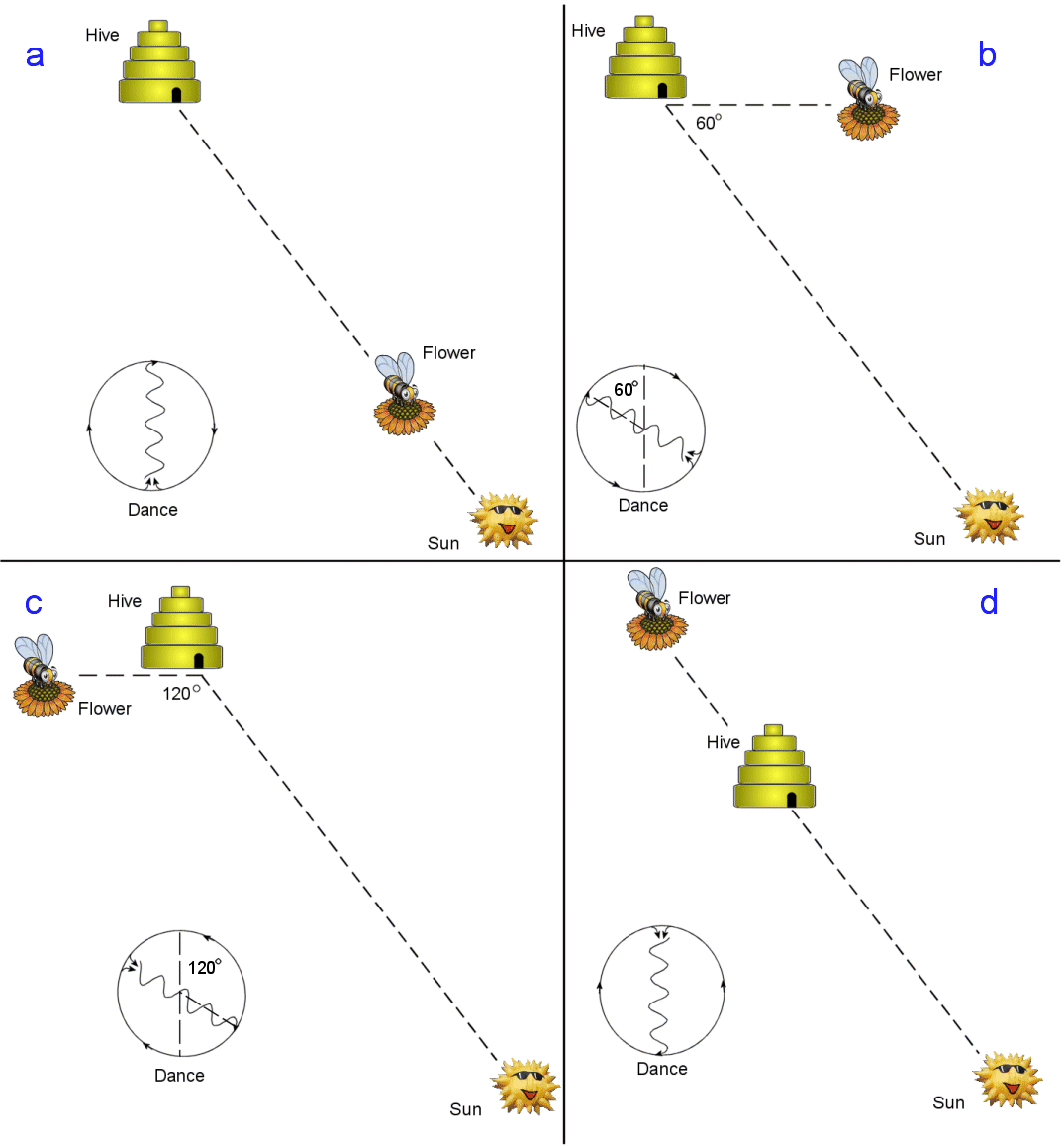

Collective skepticism and independent verification. In a honeybee colony, the self-correction mechanism reaches a new level of sophistication through the combination of collective signaling and individual experience. When a scout bee returns to the hive and signals a foraging site by performing the "waggle dance," other bees do not simply copy her behavior.

- Individual filter: A recruit bee reads the coordinates from the scout's dance, but before relaying this signal further, she must personally fly to the site and assess the quality of the resource.

- Correction through comparison: If the nectar turns out to be scarce or conditions at the site have changed, the recruit will not dance upon returning. The signal about a poor route simply fades, receiving no confirmation.

- Mathematical robustness: Thanks to this "independent verification," the swarm is protected from cascading errors. The system reflects reality through thousands of individual filters, allowing the colony to select the best food source from dozens available.

Epistemic stigmergy. In human culture, the scientific method performs the same function as pheromone trails or bee dances — it is a protocol of interaction that transforms the chaotic activity of individual researchers into a self-correcting process of knowledge production. Scientific papers are the analogue of chemical pheromones — "markers" in the environment that point other agents toward promising directions. Citation works like pheromone accumulation: the more frequently a result is reproduced and cited, the "stronger" that route becomes in the landscape of knowledge. The specialization of scientists allows the system to process vast datasets in parallel: thousands of labs, like scouts in a swarm, probe different hypotheses simultaneously, requiring no centralized command.

In such swarm systems, there is no unified consciousness. There is distributed reflection without a reflector, and it works. Our minds are quick to insert an agent into the description: "nature decided," "evolution optimizes," "the model understands." This is convenient language but poor ontology: the feedback loop operates without the intention of any subject. In this respect, swarm systems resemble the molecular systems we examined earlier when discussing Le Chatelier's principle.

Up to this point, each new level of complexity has yielded deeper reflection. A particle resists disturbance. A molecular ensemble shifts its equilibrium. A cell proofreads its own work. An organism predicts the consequences of its own actions and evaluates its confidence. A collective optimizes routes and knowledge. One might think the trend continues without limit: keep increasing complexity, and reflection will keep deepening. But this ladder has a ceiling.

The Meta-Level. Recursive Self-Observation and the Limits of Control

Second-order cybernetics: "The observer within." If classical cybernetics (Wiener) studied how a system controls an object, second-order cybernetics (Heinz von Foerster, 1979; Heinz von Foerster, 1991) focuses on systems that include the observer: the system manages not only some correctable parameter but reflects on how it itself observes and makes decisions. The next step follows naturally — if the observer is part of the system, one needs to formally describe what remains stable in recursive self-observation and is recognized as "the same." In von Foerster's framework, this role is played by the concept of eigenforms.

Eigenforms (Heinz von Foerster, 1976) are the "proper forms" of a system that emerge from recursive processes. For certain functions, repeated application to their own output ($f(f(f(...)))$) converges to a fixed point, a value $x$ that satisfies $x = f(x)$ and no longer changes under further application: the mathematical equivalent of a stable "self." A biological example of such a mechanism is vision: we do not see a "picture" but rather the result of the brain's recursive verification of what it expects to see. The system "reflects" on its expectations until they converge with the signal. This is demonstrated, for instance, by experiments with inverting goggles (George M. Stratton, 1896; George M. Stratton, 1897): when the goggles are worn continuously, the brain gradually adapts and "flips" the image back.
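A numerical illustration of such a stable form is the fixed point of the cosine function. This is a minimal sketch; the helper name `eigenform` and the choice of `math.cos` are assumptions made for the example, not von Foerster's notation.

```python
import math
from typing import Callable


def eigenform(f: Callable[[float], float], x0: float,
              tol: float = 1e-12, max_iter: int = 10_000) -> float:
    """Apply f to its own output until the value stops changing."""
    x = x0
    for _ in range(max_iter):
        nxt = f(x)
        if abs(nxt - x) < tol:
            return nxt
        x = nxt
    raise RuntimeError("iteration did not settle on a stable value")


from_zero = eigenform(math.cos, 0.0)
from_three = eigenform(math.cos, 3.0)

print(from_zero)                               # a value x with cos(x) = x
print(abs(from_zero - from_three) < 1e-9)      # different starting points, same stable form
```

The stable value does not depend on where the recursion starts, which is the sense in which it belongs to the process rather than to the input. Not every function behaves this way: the helper raises an error when the iteration fails to settle.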

At the software level, reflection manifests through an algorithm's ability to treat its own code as data. The clearest embodiment of this principle is the quine: a program that, when executed, outputs its own exact source code. The existence of quines shows that self-reference is not a logical error. Every Turing-complete programming language admits one (a consequence of Kleene's recursion theorem), so a sufficiently expressive system can contain within itself a complete set of instructions for reproducing itself, much as DNA contains the blueprint for a cell capable of copying that very DNA.
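A classic two-line Python quine makes this concrete. The sketch below builds the quine's source as a string, runs it, and checks that the output reproduces the source; the wrapper `run_and_capture` is a helper introduced only for this demonstration.

```python
import contextlib
import io

# The quine is the two-line program obtained by formatting the template with itself:
#     s = 's = %r\nprint(s %% s)'
#     print(s % s)
template = 's = %r\nprint(s %% s)'
quine_source = template % template


def run_and_capture(source: str) -> str:
    """Execute Python source in a fresh namespace and return what it prints."""
    buffer = io.StringIO()
    with contextlib.redirect_stdout(buffer):
        exec(source, {})
    return buffer.getvalue()


output = run_and_capture(quine_source)
print(output == quine_source + "\n")   # the program's output is its own source
```

The trick is the same division of labor as in the DNA analogy: the string `s` serves both as data to be printed and as the template that describes how to print it.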

Von Foerster's practical insight is that reflection is not merely a system's "internal commentary" about itself, but rather a practical mechanism for stabilizing invariants through recursive self-checking. Therefore, for complex systems (including LLMs), it is important to evaluate not only the accuracy of an answer, but also which stable forms the system preserves upon repeated checking and how it restructures them when an error occurs.

The computational mechanism: the reflective tower. If eigenforms describe the stable outcome of recursive self-observation, in programming such a mechanism is typically modeled through a hierarchy of interpreters, where each upper level treats the one below not as "external data" but as its own modifiable object. In other words, this is the recursion of an observer over an inner observer: an interpreter can interpret and rewrite the interpretation rules of the previous level. Ascending this "tower" of reflection, a program (for example, the metacircular evaluator in Lisp; see Harold Abelson, Gerald Jay Sussman, Julie Sussman, 1996) can modify its own logic during execution. This is the digital analogue of DNA polymerase's built-in "eraser": the system detects an inefficiency in the base protocol and dynamically corrects it, turning reflection into an instrument of fundamental self-modification.

The practical consequence for development: a mature system should have not only a "working" layer but also a meta-layer of self-observation and self-correction. In engineering terms, this means observability, automated checks, canary releases, rollback, and loops like generate → critique → revise in LLM systems. In other words, a product's robustness is determined not by the absence of errors but by the architecture's ability to detect and fix its own errors before they become systemic.
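The generate → critique → revise pattern can be sketched as a small generic loop. This is a schematic under stated assumptions: in an LLM system, `critique` and `revise` would be model or tool calls, whereas here they are plain functions, and the numeric toy task (approximating the square root of 2) is chosen only so the sketch runs on its own.

```python
from typing import Callable, TypeVar

T = TypeVar("T")


def reflect(draft: T,
            critique: Callable[[T], float],
            revise: Callable[[T, float], T],
            tolerance: float,
            max_rounds: int = 20) -> tuple[T, int]:
    """Revise a draft until the critic's error is within tolerance.

    Returns the accepted draft and the number of revisions made. If the
    budget runs out, the last revision is returned without a final check.
    """
    for rounds in range(max_rounds):
        error = critique(draft)           # meta-layer: evaluate the working layer's output
        if abs(error) <= tolerance:
            return draft, rounds
        draft = revise(draft, error)      # feed the error signal back into the draft
    return draft, max_rounds


# Toy stand-ins: the "answer" is a number whose square should be 2.
result, rounds = reflect(
    draft=1.0,
    critique=lambda x: x * x - 2.0,
    revise=lambda x, err: x - err / (2.0 * x),   # Newton step on x^2 - 2
    tolerance=1e-9,
)
print(result, rounds)
```

The `max_rounds` budget is part of the design: a self-correcting loop needs an explicit stopping rule, otherwise a critic that is never satisfied turns correction into an infinite loop.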

The logical poison: Gödel's theorems. In 1931, Kurt Gödel mathematically demonstrated the limits of self-reflection. To grasp the essence of Gödel's idea in simplified terms, imagine a "Perfect Logic Textbook" that claims to have answers to all questions. Gödel proved that if this textbook is thick enough to describe itself, one peculiar page will inevitably appear in it.

Imagine we read the following statement on that page:

"This statement cannot be proven by the rules of this Textbook."

Let us try to verify this statement using only the Textbook's own logic:

- Suppose we manage to prove it. But the statement itself says it cannot be proven. So the Textbook has proven a falsehood. Conclusion: The Textbook is broken (inconsistent).

- Suppose we cannot prove it. Then it turns out the statement is true! (After all, it claimed exactly that — that it cannot be proven.)

In other words, in any sufficiently powerful formal system of logic, one can construct a statement that asserts its own unprovability. If the system proves it, the system is inconsistent; if it does not, the system is incomplete. To fully "understand" the system, one must step outside it into a meta-system. But there, the same problem repeats.

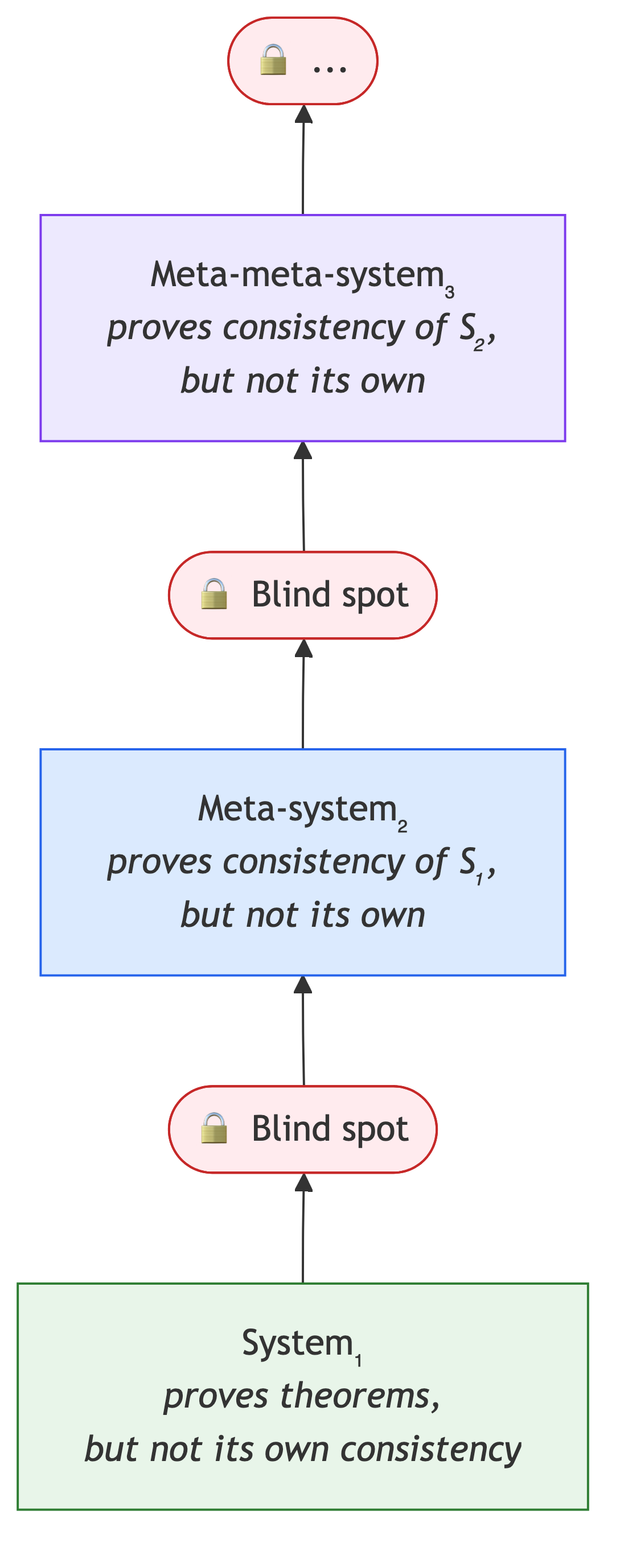

Gödel's two incompleteness theorems establish a hard epistemological limit for any complex system: complete self-knowledge from within is impossible. The first theorem states that in any consistent, sufficiently powerful formal system, there are true statements that cannot be proven by the system's own means. "Truth" is always broader than "provability," and the system never contains the full totality of knowledge about itself. The second theorem is even more devastating: such a system cannot prove its own consistency while remaining within its own rules. To verify the absence of internal contradictions, the system must ascend to a meta-level and examine itself "from outside," yet that external vantage point is itself a new system with the same blind spots (Fig. 8).

Turing demonstrated the computational analogue of Gödel's limitation in the world of programs (Alan M. Turing, 1936/1937). Suppose we have a "perfect predictor" that, for any program, tells us in advance and without error whether it will halt or loop forever. Then one could write a paradox program that does the opposite: if the predictor says "halts," it enters an infinite loop; if it says "loops," it halts immediately. If we run this program on itself, any answer the predictor gives will be wrong. Therefore, a universal and infallible halting test cannot exist in principle.
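The structure of the argument can be modeled in a few lines. This is a toy model of the diagonal construction, not a proof and not a real halting test: the behavior of the paradox program is represented symbolically (as a boolean) rather than by actually running an infinite loop, and all names are hypothetical.

```python
from typing import Callable

# A claimed halting predictor: (program, input) -> True if it will halt.
Predictor = Callable[[str, str], bool]


def paradox_halts(predictor: Predictor) -> bool:
    """What the paradox program really does when run on itself.

    It asks the predictor about itself and does the opposite: it loops
    forever if told "halts" and halts at once if told "loops".
    """
    predicted_to_halt = predictor("paradox", "paradox")
    return not predicted_to_halt


def predictor_is_right(predictor: Predictor) -> bool:
    """Compare the prediction with the program's actual behavior."""
    return predictor("paradox", "paradox") == paradox_halts(predictor)


says_halts = lambda program, data: True
says_loops = lambda program, data: False

print(predictor_is_right(says_halts))   # wrong: the program loops
print(predictor_is_right(says_loops))   # wrong: the program halts
```

Whatever the predictor answers about this one program, the program's definition guarantees the opposite behavior, which is why no predictor can be correct on all inputs.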

So reflection has a fundamental limit: a system can correct itself, but it cannot obtain complete knowledge of its own behavior from within alone. In formal logic, this manifests as incompleteness (Gödel); in computability theory, as the undecidability of the halting problem (Turing). But this does not make reflection useless — merely an open, never-ending process.

Implementing Reflection in Large Language Models

In modern large language models, the self-attention mechanism is often mistakenly equated with digital reflection. At each layer of the Transformer architecture, every token does indeed "look back" at the entire preceding context, mathematically weighting the relevance of each word. The model appears to evaluate what has already been written, selecting the most accurate continuation. However, unlike a living cell or a brain, this process lacks the essential attribute of reflection — a closed feedback loop.

New tokens have no effect on previous ones; information flows strictly in one direction, from input layer to output layer. The standard architecture lacks recurrent loops or internal control mechanisms that could compare the result with an expectation and send a corrective signal back into the system before the next word is generated. If DNA polymerase can "erase" an error and a bee can "change its mind" after verification, a transformer simply computes the most probable next step. This is static context analysis, not dynamic self-correction. In this sense, modern LLMs are vast "prediction machines" that simulate consequences but do not control causes.
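The one-directional flow can be checked directly on a stripped-down causal attention layer. This is a didactic sketch in plain Python, assuming scalar token values and a single head with no learned weights; it is far simpler than a production transformer layer, and the function names are illustrative.

```python
import math


def score_matrix(values: list[float]) -> list[list[float]]:
    """Raw relevance of token j for token i (here simply the product of their values)."""
    return [[q * k for k in values] for q in values]


def causal_attention(values: list[float], scores: list[list[float]]) -> list[float]:
    """Single-head attention in which position i sees only positions j <= i."""
    outputs = []
    for i in range(len(values)):
        visible = scores[i][: i + 1]                      # causal mask: future tokens are cut off
        peak = max(visible)
        weights = [math.exp(s - peak) for s in visible]   # numerically stable softmax
        norm = sum(weights)
        outputs.append(sum(w * v for w, v in zip(weights, values)) / norm)
    return outputs


prefix = [0.2, -1.0, 0.5]
extended = prefix + [3.0]          # append a new token to the context

before = causal_attention(prefix, score_matrix(prefix))
after = causal_attention(extended, score_matrix(extended))

print(after[:3] == before)         # earlier positions are unchanged by the new token
```

The representation of each earlier token is fixed once computed. A later token can read it but cannot send a correction back, which is the missing half of the feedback loop.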

Decorative post-reflection. Modern machine learning engineering is shifting from "fast thinking" architectures (System 1, per Daniel Kahneman, 2003) toward "slow thinking" systems (System 2) capable of internal delay and verification. If the classic transformer is a straight line, new approaches try to bend it into a loop. One popular way to overcome this linearity is the chain-of-thought technique: the model is made to "think aloud." Each newly generated reasoning step enters the context window, so every subsequent forward pass sees the model's earlier "thoughts" as part of its input. This creates a simulation of an internal comparator: the system gains the ability to correct course based on its own intermediate conclusions. However, post-reflection is a fragile way to add iteration. A 2025 study by Anthropic (Yanda Chen et al., 2025) showed that with such mechanisms the neural network tends not so much to reflect as to rationalize, fitting an explanation to a decision already made.

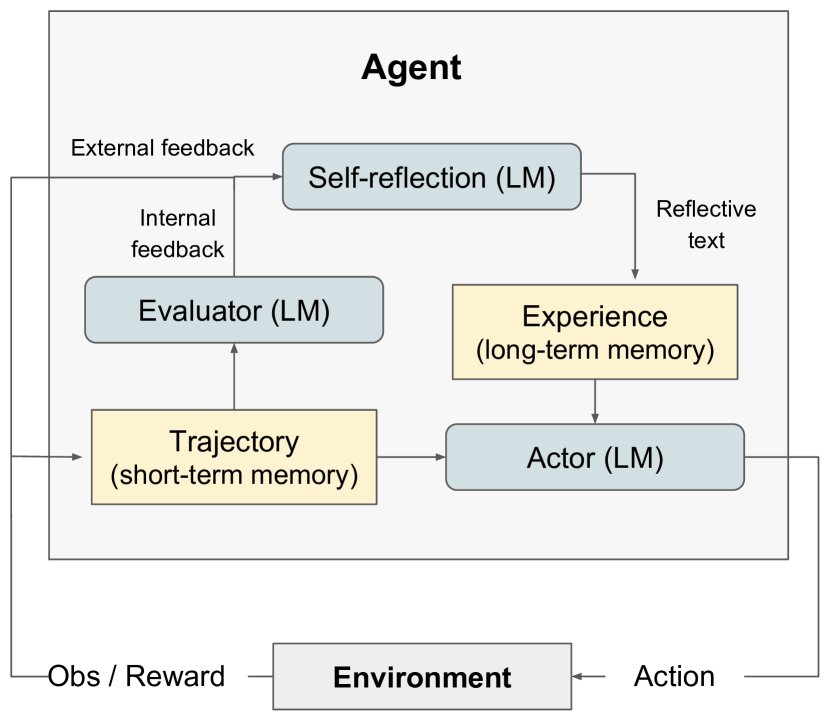

Swarm reflection through human evaluators. Between post-reflection at the inference stage and self-correction at the training stage lies an intermediate loop that directly mirrors the swarm logic from the previous section. In RLHF (Reinforcement Learning from Human Feedback; Long Ouyang et al., 2022), human evaluators play the role of recruit bees: they receive the model's "dance" (a pair of responses), personally assess the quality of each, and vote for the better one. From thousands of such individual assessments, a reward model is constructed, which then guides training. As in a bee colony, collective skepticism is at work: no single evaluator determines the direction alone, and cascading errors are dampened by the independence of judgments. An extension of this idea is RLAIF (RL from AI Feedback), where the role of "recruits" is taken by another model, closing the loop within the machine system. This is the largest-scale reflective loop in modern LLMs: it is slow (update cycles take weeks), but it is what shapes the model's baseline behavior.
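How individual votes become a reward signal can be sketched with the Bradley-Terry preference model, a common choice for learning from pairwise comparisons. This is a toy under stated assumptions: a real reward model is a neural network that scores arbitrary text, whereas this sketch fits one scalar score per fixed response, and the votes are invented for the example.

```python
import math


def fit_reward_scores(num_items: int, votes: list[tuple[int, int]],
                      lr: float = 0.1, epochs: int = 500) -> list[float]:
    """Fit one score per response from (winner, loser) votes.

    Model: P(winner beats loser) = sigmoid(score[winner] - score[loser]),
    trained by plain gradient ascent on the log-likelihood of the votes.
    """
    scores = [0.0] * num_items
    for _ in range(epochs):
        grads = [0.0] * num_items
        for winner, loser in votes:
            p_win = 1.0 / (1.0 + math.exp(scores[loser] - scores[winner]))
            grads[winner] += 1.0 - p_win    # push the preferred response up
            grads[loser] -= 1.0 - p_win     # and the rejected one down
        for i in range(num_items):
            scores[i] += lr * grads[i] / len(votes)
    return scores


# Invented votes from independent evaluators over three responses (0, 1, 2).
votes = ([(0, 1)] * 7 + [(1, 0)] * 3 +
         [(1, 2)] * 8 + [(2, 1)] * 2 +
         [(0, 2)] * 9 + [(2, 0)] * 1)

scores = fit_reward_scores(3, votes)
print(scores[0] > scores[1] > scores[2])   # the aggregate ranking follows the majority
```

Dissenting votes do not flip the ranking; they only shrink the gaps between scores. This is the dampening effect of independent judgments described above.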

Reflection at the training level. If post-reflection in "think aloud" mode often leads to rationalization, the next step is to move self-correction from the inference stage into the training stage itself. This idea is realized by the SCoRe method (Aviral Kumar et al., 2024), where the model is trained not only to produce an answer but to correct its own errors across successive attempts. The key difference from the chain-of-thought approach is that corrective behavior becomes part of the model's weights rather than a transient effect of a clever prompt. This shifts the focus from "explain your solution" to "improve your solution": the system must increase the quality of its second attempt relative to the first, rather than simply producing an elegant justification for its initial answer.

However, feedback in such a mechanism is both slow and expensive: first the model makes an attempt, then a correction, then a reward is computed over this chain, and only then are parameters updated. This makes training slower and less stable than conventional methods, and introduces a quality-versus-cost tradeoff at inference time. Additional self-correction iterations yield better answers but increase latency and expense.

Reflection at the architecture level. While training attempts to embed self-correction in the model's weights, the architectural approach changes the very geometry of computation. Instead of a unidirectional pass from input to output, researchers introduce recurrent loops (e.g., in Mostafa Dehghani et al., 2018, Universal Transformers): the model can return to an intermediate state and decide for itself how many iterations a task requires. In such a design, "thinking time" becomes a manageable resource rather than a fixed number of layers. This directly echoes the section on dolphins and macaques: when confidence is low, the system opts not for the "fastest answer" but for a more cautious strategy, increasing verification time or reducing error risk.

The problem is that such architectures align poorly with the economics of modern hardware. Standard transformer training maps well onto GPUs with straightforward parallelization, whereas sequential recurrent steps introduce noticeable speed and cost penalties. In other words, "honest" architectural reflection often proves too expensive at scale.

Hence the interest in hybrid compromises: what the industry needs is not the "perfectly reflective" model but one that is "smart enough and fast enough." Architectures like Mamba (Albert Gu, Tri Dao, 2023) and RWKV (Bo Peng et al., 2023) address a common practical challenge: avoiding the need to attend over the entire preceding text at each step, as a classic transformer does. Instead, the model maintains a compact "working state" and updates it as tokens are read. This allows processing longer inputs within the same budget and lowers inference costs. The downside is that on certain tasks quality may be less consistent, and the ecosystem around these models is still thinner: fewer ready-made tools, proven recipes, and operational practices than for classic transformers.
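The idea of a compact working state can be illustrated with the simplest possible linear recurrence. This is not the actual update rule of Mamba or RWKV, whose state updates are learned and input-dependent; the fixed `decay` factor and both names below are assumptions chosen to show the cost difference.

```python
def summary_from_scratch(tokens: list[float], decay: float) -> float:
    """Revisit the whole history on every call: the cost grows with its length."""
    n = len(tokens)
    return sum(x * decay ** (n - 1 - i) for i, x in enumerate(tokens))


class RunningState:
    """Fixed-size state updated once per token: h <- decay * h + x."""

    def __init__(self, decay: float) -> None:
        self.decay = decay
        self.h = 0.0

    def update(self, x: float) -> float:
        self.h = self.decay * self.h + x
        return self.h


tokens = [0.5, -1.0, 2.0, 0.25, 1.5]
state = RunningState(decay=0.9)
for token in tokens:
    state.update(token)            # constant work per token, no history kept

print(abs(state.h - summary_from_scratch(tokens, 0.9)) < 1e-12)
```

Both routes produce the same summary, but the running state never stores or rereads earlier tokens. The price is that whatever the state has decayed away cannot be recovered later, which is one intuition for the less consistent quality on some tasks.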

This is precisely why the industry has massively shifted toward procedural simulation of architectural reflection at the inference stage. The model uses its own intermediate text as temporary working memory, re-reads it, and corrects course. This is a practical compromise: the architecture remains nearly linear, but behavior becomes iterative through additional computational steps.

Conclusion

Consciousness includes reflection, but reflection requires neither consciousness, nor intention, nor subjective experience. A closed feedback loop is sufficient. This is why it works even where there is no "inner observer" in the human sense: amid noise, uncertainty, and complex signals. Thus a ladder of increasing sophistication emerges: from a single-loop response to hierarchies of loops, from reacting to disturbances to prediction, from prediction to evaluating one's own confidence, from individual correction to distributed self-organization.

Against this backdrop, the gap in modern LLMs becomes especially visible. The classic transformer architecture remains linear: the pass goes forward, with no built-in self-checking loop. What looks like "reflection" in commercial models is more often implemented procedurally: the model verbalizes intermediate steps and then reads them back as new input. This is a workable engineering trick, but it is still not architectural reflection — it is its operational simulation.

The practical takeaway, however, is powerful: systems without intention can solve problems no less effectively than "intentional" systems, provided the task is specified cybernetically — through a goal, an error signal, and a corrective loop. And reflection remains the only property traditionally associated with consciousness that we already know how to design, measure, and scale. That is why the current research program is not metaphysical but engineering: not "how to engender experience" but "how to make the loop more robust." The more complex the task, the more nested loops of checks and balances the system requires; no additional "metaphysical layer" is needed for this.